Data Engineering Insights

Introduction to Modern Data Pipelines: Key Concepts and Architecture

Welcome to Data Engineering Insights!

Hi everyone, and welcome to the first edition of Data Engineering Insights! I’m thrilled to kick off this journey with you as we dive deep into the core concepts, best practices, and cutting-edge technologies shaping the world of data engineering. My goal is to create a place where data professionals, whether beginners or experts, can come together to explore the latest ideas and practical advice in the field.

Why Data Pipelines Matter

In today’s data-driven world, companies rely on data to make strategic decisions, improve customer experience, and even drive innovation. But raw data isn’t inherently valuable. For data to be actionable, it needs to be collected, processed, and stored in a usable format. That’s where data pipelines come in.

Data pipelines form the backbone of modern data infrastructure, enabling us to move data from various sources to a destination where it can be analyzed, all while maintaining quality, security, and reliability.

What is a Data Pipeline?

Simply put, a data pipeline is a series of processes that moves data from one place to another, transforming it along the way. Data pipelines can be used for tasks such as:

Data ingestion: Collecting data from diverse sources (like databases, applications, IoT devices).

Data transformation: Cleaning and structuring data to make it usable.

Data storage: Saving data in warehouses or lakes for easy access.

Data visualization: Presenting data insights in a dashboard or report format.

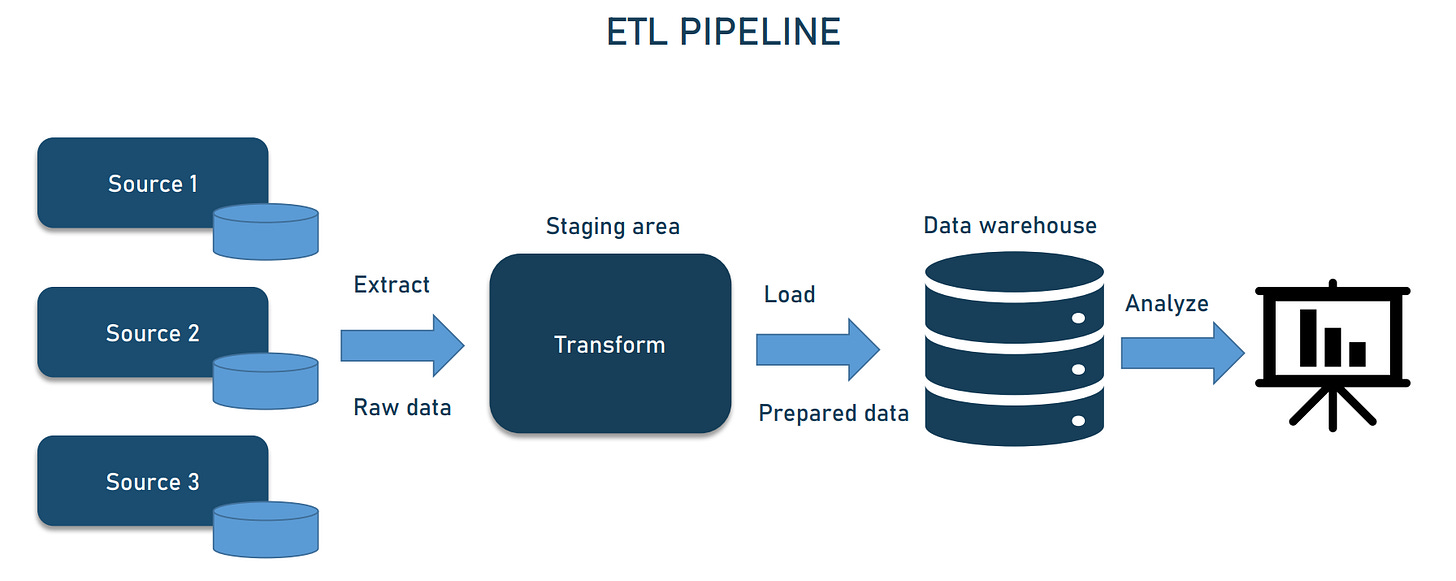
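To make the four tasks above concrete, here is a minimal sketch of a pipeline in plain Python. Everything in it is an assumption for illustration: the `RAW_CSV` sample data, the `orders` table, and the function names are hypothetical, and an in-memory SQLite database stands in for a real warehouse.

```python
import csv
import io
import sqlite3

# Hypothetical raw input standing in for an export from a source system.
RAW_CSV = """order_id,customer,amount
1, alice ,19.99
2,BOB,5.00
3,carol,
4,alice,30.01
"""


def ingest(raw_text):
    """Ingestion: read rows from a source (here, an in-memory CSV string)."""
    return list(csv.DictReader(io.StringIO(raw_text)))


def transform(rows):
    """Transformation: drop incomplete rows, normalize names, cast types."""
    cleaned = []
    for row in rows:
        amount = row["amount"].strip()
        if not amount:
            continue  # skip rows with a missing amount
        customer = row["customer"].strip().lower()
        cleaned.append((int(row["order_id"]), customer, float(amount)))
    return cleaned


def store(conn, records):
    """Storage: persist the cleaned records in a table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", records)
    conn.commit()


def report(conn):
    """Visualization/analysis: aggregate the data for a dashboard or report."""
    cursor = conn.execute(
        "SELECT customer, ROUND(SUM(amount), 2) FROM orders "
        "GROUP BY customer ORDER BY customer"
    )
    return cursor.fetchall()


def run_pipeline(raw_text):
    """Chain the stages: ingest -> transform -> store -> report."""
    conn = sqlite3.connect(":memory:")
    store(conn, transform(ingest(raw_text)))
    return report(conn)


if __name__ == "__main__":
    for customer, total in run_pipeline(RAW_CSV):
        print(customer, total)
```

Keeping each stage as its own function mirrors how real pipelines are usually organized: any one stage can be swapped for a heavier tool later without touching the others.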

Core Components of a Modern Data Pipeline

To understand a modern data pipeline, let’s break down its essential components:

Ingestion

The first step involves gathering data from various sources. Ingestion can be either batch (processing data at scheduled intervals) or real-time (processing data as it arrives). Tools like Apache Kafka and AWS Kinesis are popular for real-time ingestion, while Apache NiFi and Apache Airflow are commonly used for batch processing, with Airflow scheduling and orchestrating the batch jobs.

Transformation

After ingestion, data often needs cleaning, normalization, or enrichment. This is the transformation step, where tools like Apache Spark or cloud-based solutions such as GCP Dataflow help you structure the data to suit your analytics needs.

Storage

Next, the data is stored in a warehouse (like Snowflake or BigQuery) or a lake (like Amazon S3 or Google Cloud Storage). The choice between a warehouse and a lake depends on the data type and analysis requirements.

Visualization and Analysis

Finally, data needs to be visualized or analyzed. This step involves BI tools like Tableau, Power BI, or custom dashboards that enable stakeholders to draw insights and make informed decisions.
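The difference between the batch and real-time ingestion modes described in this section can be sketched in a few lines of Python. This is an illustration only: the `batch_ingest` and `stream_ingest` functions and the event shape are hypothetical, and a plain Python iterable stands in for a real event source such as a Kafka topic or a Kinesis stream.

```python
from typing import Dict, Iterable, Iterator, List


def batch_ingest(events: List[Dict[str, int]]) -> Dict[str, int]:
    """Batch: the whole accumulated set is processed in one scheduled run."""
    return {"count": len(events), "total": sum(e["value"] for e in events)}


def stream_ingest(events: Iterable[Dict[str, int]]) -> Iterator[Dict[str, int]]:
    """Real-time: the result is updated as soon as each event arrives."""
    count, total = 0, 0
    for event in events:
        count += 1
        total += event["value"]
        # An up-to-date result is available after every single event.
        yield {"count": count, "total": total}


if __name__ == "__main__":
    sample = [{"value": 10}, {"value": 20}, {"value": 30}]

    # Batch: one answer, available only after all events were collected.
    print(batch_ingest(sample))

    # Streaming: a running answer after each event.
    for snapshot in stream_ingest(iter(sample)):
        print(snapshot)
```

Both functions arrive at the same final numbers. What changes is when the answer becomes available, which is the trade-off to weigh when choosing an ingestion mode.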

Key Architecture Considerations

As we design and build a data pipeline, a few architectural decisions come into play:

Batch vs. Real-time Processing: Real-time pipelines are ideal for time-sensitive data, while batch processing can be more cost-effective for less time-critical data.

Scalability: Can the pipeline handle increased data volumes without performance degradation? Cloud-based solutions offer flexible scaling options.

Fault Tolerance: In case of failure, the pipeline should be able to resume processing without data loss. This is often achieved using techniques like checkpointing or building redundant data flows.
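To illustrate the checkpointing idea mentioned under fault tolerance, here is a minimal sketch, assuming a simple file-based checkpoint. The file name, the `run` function, and the `fail_at` parameter (used only to simulate a crash) are hypothetical; production frameworks such as Spark or Flink ship their own checkpointing mechanisms.

```python
import json
import os
import tempfile


def load_checkpoint(path):
    """Return the index of the next record to process (0 if no checkpoint)."""
    if not os.path.exists(path):
        return 0
    with open(path) as f:
        return json.load(f)["next_index"]


def save_checkpoint(path, next_index):
    """Write the checkpoint to a temp file, then rename it into place."""
    tmp_path = path + ".tmp"
    with open(tmp_path, "w") as f:
        json.dump({"next_index": next_index}, f)
    os.replace(tmp_path, path)  # avoids leaving a half-written checkpoint


def run(records, checkpoint_path, sink, fail_at=None):
    """Process records in order, resuming from the last saved checkpoint."""
    start = load_checkpoint(checkpoint_path)
    for i in range(start, len(records)):
        if fail_at is not None and i == fail_at:
            raise RuntimeError("simulated failure")  # hypothetical crash
        sink.append(records[i].upper())  # the "processing" step
        save_checkpoint(checkpoint_path, i + 1)


if __name__ == "__main__":
    records = ["a", "b", "c", "d"]
    sink = []
    with tempfile.TemporaryDirectory() as workdir:
        checkpoint = os.path.join(workdir, "checkpoint.json")
        try:
            run(records, checkpoint, sink, fail_at=2)  # crashes mid-run
        except RuntimeError:
            pass
        run(records, checkpoint, sink)  # resumes where the first run stopped
        print(sink)
```

One caveat with this design: if a crash happens after a record is written but before the checkpoint is saved, that record is processed again on restart. This sketch therefore gives at-least-once behavior, so the destination should tolerate duplicates.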

Popular Tools and Technologies

The data engineering ecosystem is rich with tools tailored for different stages of the pipeline:

Ingestion: Apache Kafka, AWS Kinesis, Apache NiFi

Transformation: Apache Spark, Databricks, GCP Dataflow

Storage: Snowflake, BigQuery, Amazon S3

Visualization: Tableau, Power BI, Looker

Choosing the right tool depends on factors such as the data type, required processing speed, scalability needs, and budget.

Future of Data Pipelines

Looking forward, several trends are shaping the future of data pipelines:

Serverless Architectures: As cloud providers optimize serverless options, data engineers can focus more on logic and less on infrastructure.

Data Mesh: Data ownership and decentralized data management are growing in importance as organizations strive for flexibility and agility.

Integration of AI: AI-driven transformations are becoming more common, automating data quality checks, anomaly detection, and even optimization.

Stay Tuned!

This was a quick overview of the components and considerations of modern data pipelines. In upcoming issues, I’ll break down each stage in detail. Next week, we’ll dive deeper into Data Ingestion Methods and Tools and explore how to choose the right ingestion strategy based on your data requirements and business goals.

I hope you found this introduction helpful! I’d love to hear your feedback, questions, or topics you’d like to see covered. Let’s make data engineering accessible and engaging, one newsletter at a time.

Best,

Avantika