The 30-Day DE Roadmap: Your Fast-Track to a $150K Job : How to Think Like a Data Engineer

Learn the Right Skills. Skip the Random Tools. Most people learn the wrong things. Here is what actually matters.

Welcome back to Zero2DataEngineer.

New here? 👋 Hi. I am Avantika.

I spent 5 years at Meta building data systems for ARVR -Reality Labs, Marketplace and Consumer Connectivity. I then moved on to build platforms for Walmart and Marriott, processing half a billion events a day. Yes, half a billion. Before my morning coffee.

But I have also been the person who Googled “what is a slowly changing dimension” at 11pm the night before an interview. Zero connections in tech. Broke into MAANG the hard way. Both versions of me write this newsletter — the one who learned everything the hard way, and the one who finally knows enough to make it easier for you.

Let me tell you what nobody says out loud……

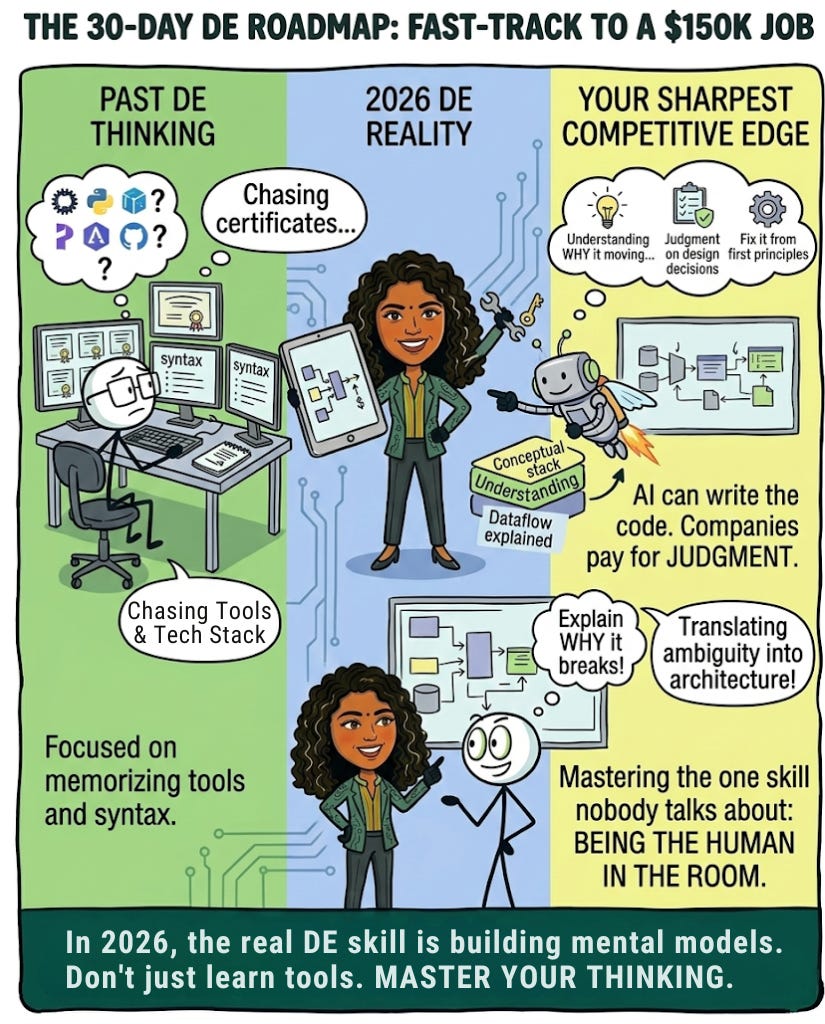

Most engineers who fail to break into Data Engineering did not fail because they lacked talent. They failed because they spent months learning tools instead of learning how to think. They memorized syntax without understanding the problem the syntax was solving. They chased certificates while the engineers getting hired were building things.

In 2026, the bar has shifted. Companies are not impressed by a list of tools on your resume. They are impressed by engineers who understand data at a conceptual level, write clean purposeful code, and can walk into a system they have never seen before and figure it out.

Because honestly, when AI can write the code in seconds, memorizing syntax is no longer your competitive advantage. What AI cannot do is understand why a pipeline is failing at 3am, make a judgment call on a schema design that has to last five years, or walk into a broken system and reason through it from first principles. That is what companies are paying $150K for in 2026. Not your ability to remember a function name. Your ability to think.

This roadmap is not about tools. It is about building the mental models that make everything else click.

Did you know most Data Engineers who land $150K roles cannot list every feature of every tool they use? But they can explain exactly why data moves the way it does, where it breaks, and how to fix it. That is the real skill.

And the engineers who move into staff and principal and manager roles? They are almost always the most technical people in the room. But not because they memorized the most.

Because they mastered the one skill nobody talks about enough: being the human in the room. The judgment. The context. The ability to sit across from a stakeholder and translate ambiguity into architecture. The instinct that comes from having actually broken things and fixed them.

In the fastest moving AI era we have ever seen, that is not a soft skill. That is your sharpest competitive edge.

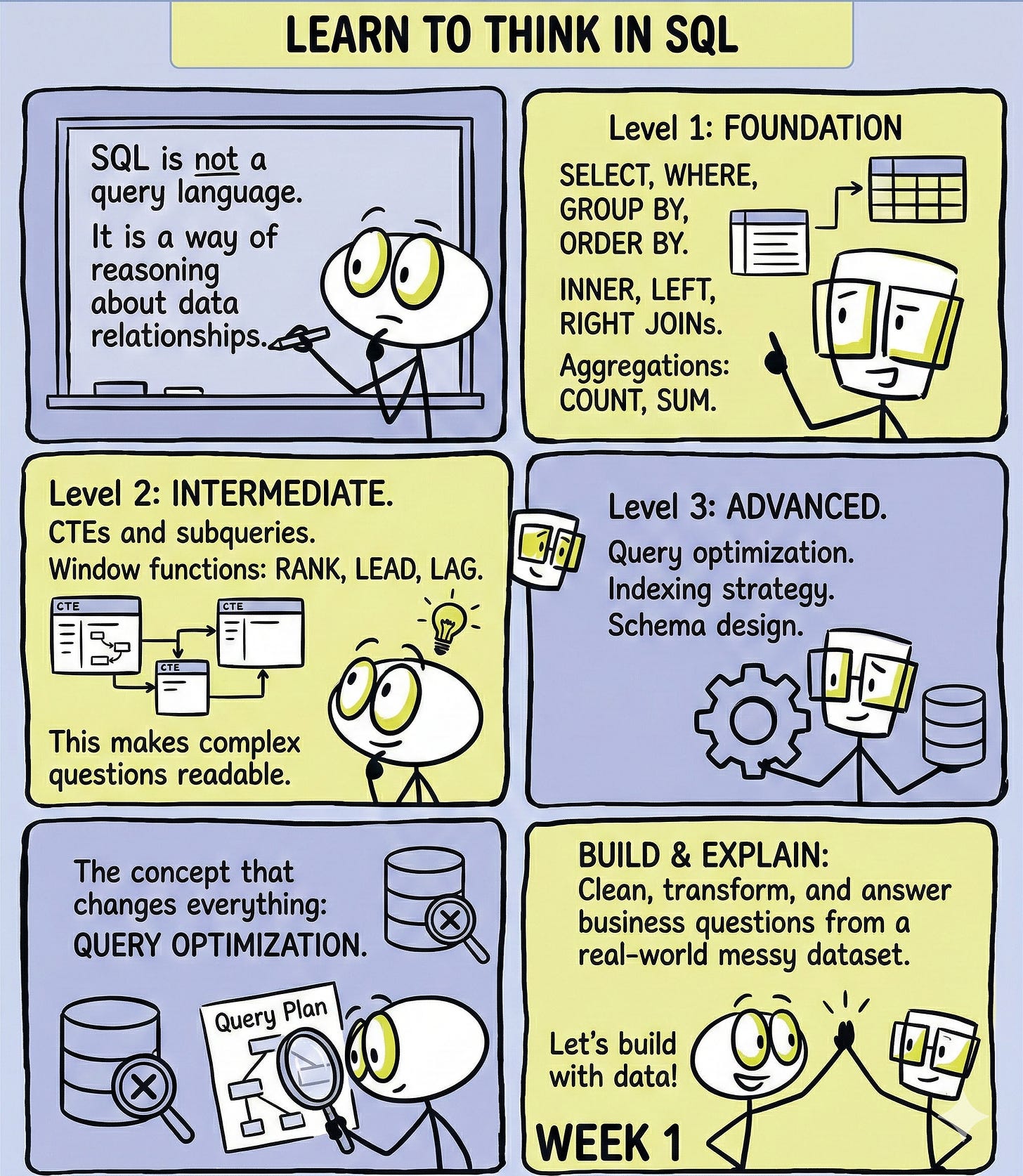

Week 1: Learn to Think in SQL

SQL is not a query language. It is a way of reasoning about data relationships.

Before you write a single line of code, understand what you are actually asking the database to do. Every JOIN is a question about how two sets of information relate to each other. Every window function is a question about context, how does this row relate to the rows around it? Every CTE is a way of breaking a complex question into smaller, readable pieces.

Level 1: Foundation How data is stored and why. SELECT, WHERE, GROUP BY, ORDER BY. INNER, LEFT, RIGHT and FULL JOINs. Aggregations: COUNT, SUM, AVG, MIN, MAX. Filtering with HAVING versus WHERE and why the difference matters.

Level 2: Intermediate CTEs and subqueries. When to use one over the other and why it affects readability. Window functions: ROW_NUMBER, RANK, DENSE_RANK, LAG, LEAD. Running totals and moving averages. Multi-table joins and handling NULL values intentionally.

Level 3: Advanced Query optimization. How a database engine reads your query, what an execution plan actually tells you, and where queries die. Indexing strategy and when indexes hurt more than they help. Writing SQL for ETL transformations, not just reporting. Schema design decisions and how they affect every query written against them.

What to build: take one messy real-world dataset, something with missing values, duplicates, and inconsistent formats, and write SQL that cleans it, transforms it, and answers three business questions from it. Do not move on until you can explain every line of code out loud.

The concept that changes everything: query optimization. Understanding why a query is slow teaches you more about how databases actually work than any course ever will.

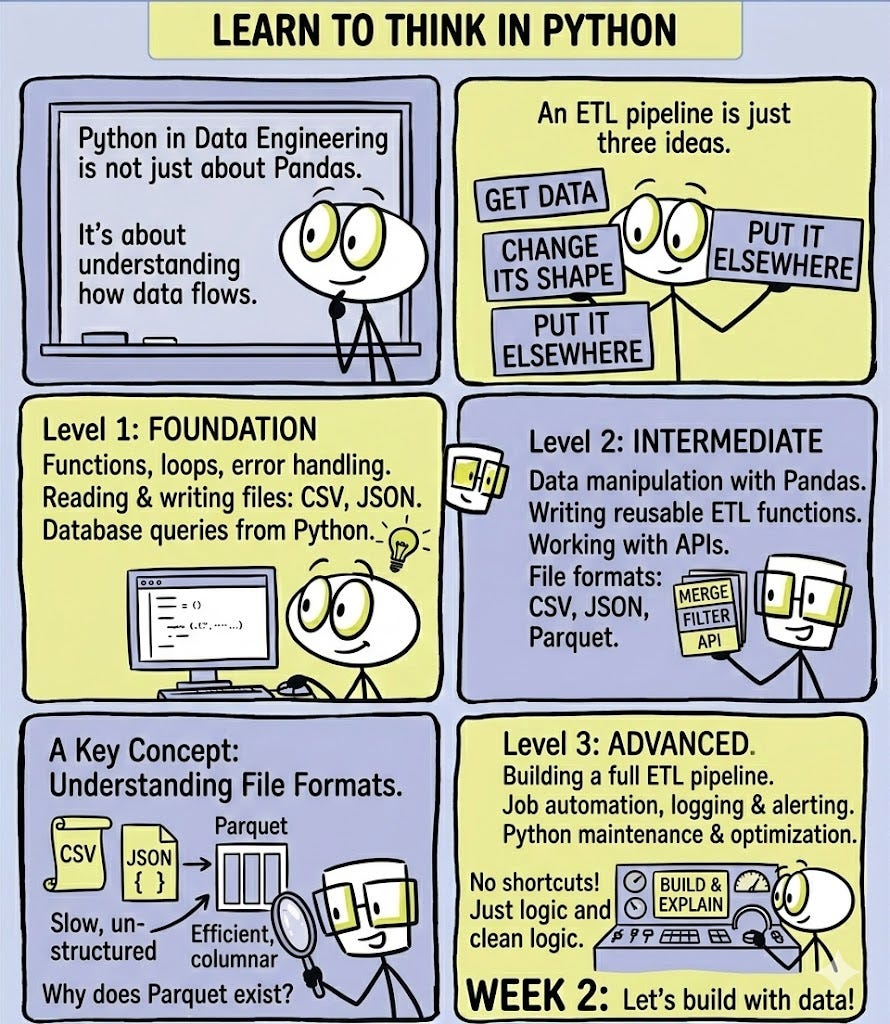

Week 2: Learn to Think in Python

Python in Data Engineering is not about knowing Pandas. It is about understanding how data flows through a system.

An ETL pipeline is just three ideas: get data from somewhere, change its shape, put it somewhere else. Everything else is implementation detail. Once that concept clicks, the code almost writes itself.

Level 1: Foundation Python data types and when to use each one. Reading and writing files: CSV, JSON, TXT. Functions, loops, and error handling. Connecting to a database and running a query from Python.

Level 2: Intermediate Data manipulation with Pandas. Merging, reshaping, filtering and cleaning dataframes. Writing reusable ETL functions. Working with APIs and parsing responses. File format differences: CSV versus JSON versus Parquet and when each one is the right choice.

Level 3: Advanced Building a full ETL pipeline from scratch. Scheduling and automating jobs. Handling failures gracefully with logging and alerting. Writing Python that a team can maintain, not just code that runs once. Performance optimization when your dataset stops fitting in memory.

What to build: a Python script that pulls data from a public API, transforms it into a clean structured format, and loads it into a local database. No frameworks. No shortcuts. Just you, the data, and the logic.

The concept that changes everything: understanding file formats. Why does Parquet exist? What problem does it solve that CSV does not? Engineers who understand the why behind format choices make better decisions at every level of a system.

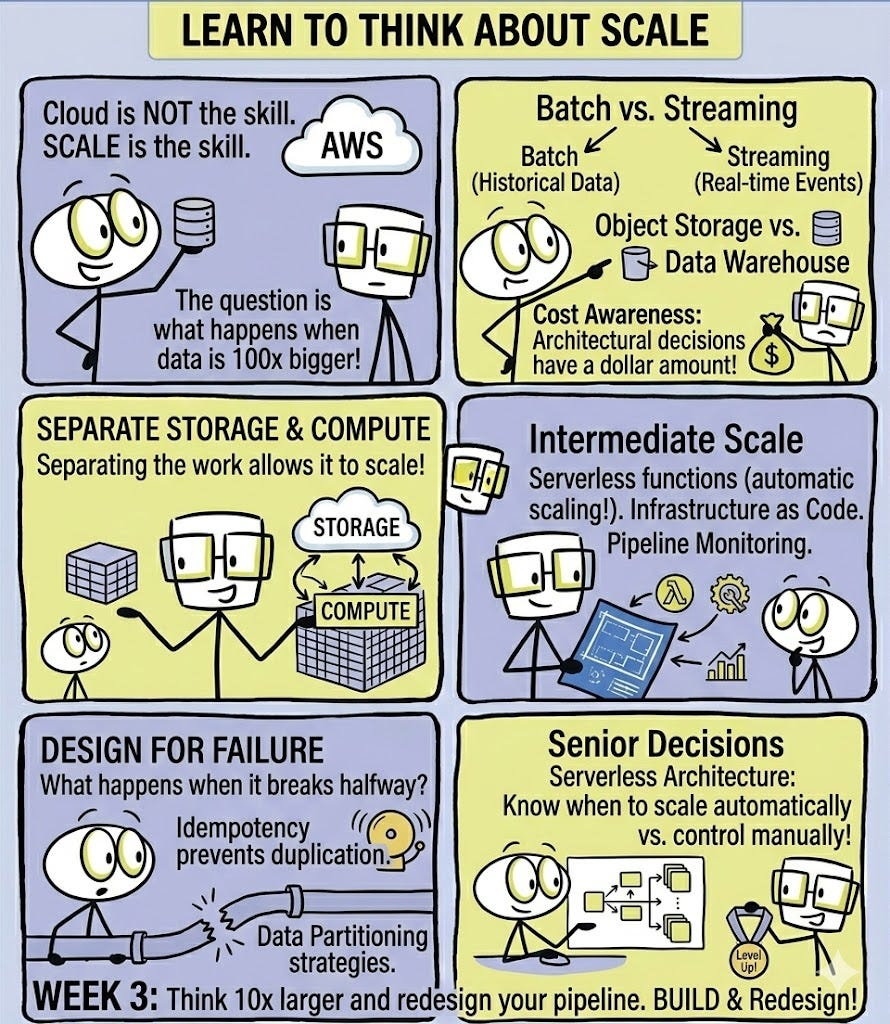

Week 3: Learn to Think About Scale

Here is where most people get confused. Cloud platforms are not the skill. Scale is the skill.

The question is never “how do I use AWS?” The question is “what happens to my pipeline when the data is 100 times bigger than it is today?” Cloud platforms are just where you go to find out.

Level 1: Foundation What cloud infrastructure actually is and why it exists. Object storage versus databases versus data warehouses. The difference between batch processing and streaming and when each one is the right choice. Cost awareness: why architectural decisions have a dollar amount attached to them.

Level 2: Intermediate Designing pipelines that separate storage from compute. Serverless functions and when to use them. Infrastructure as code: why writing infrastructure in code is the same discipline as writing application code. Monitoring pipelines and knowing when something is wrong before users tell you.

Level 3: Advanced Designing for failure. What happens when a pipeline breaks halfway through and how idempotency protects you. Data partitioning strategies and how they affect query performance downstream. The tradeoffs between real-time and near-real-time architectures and the cost of each.

What to build: take the ETL pipeline from the previous section and think through what would break at 10x the data volume. Then redesign it to handle that load. The redesign does not need to be deployed anywhere. The thinking is the exercise.

The concept that changes everything: serverless architecture. Understanding when to let infrastructure scale automatically versus when to control it manually is a decision every senior engineer makes constantly.

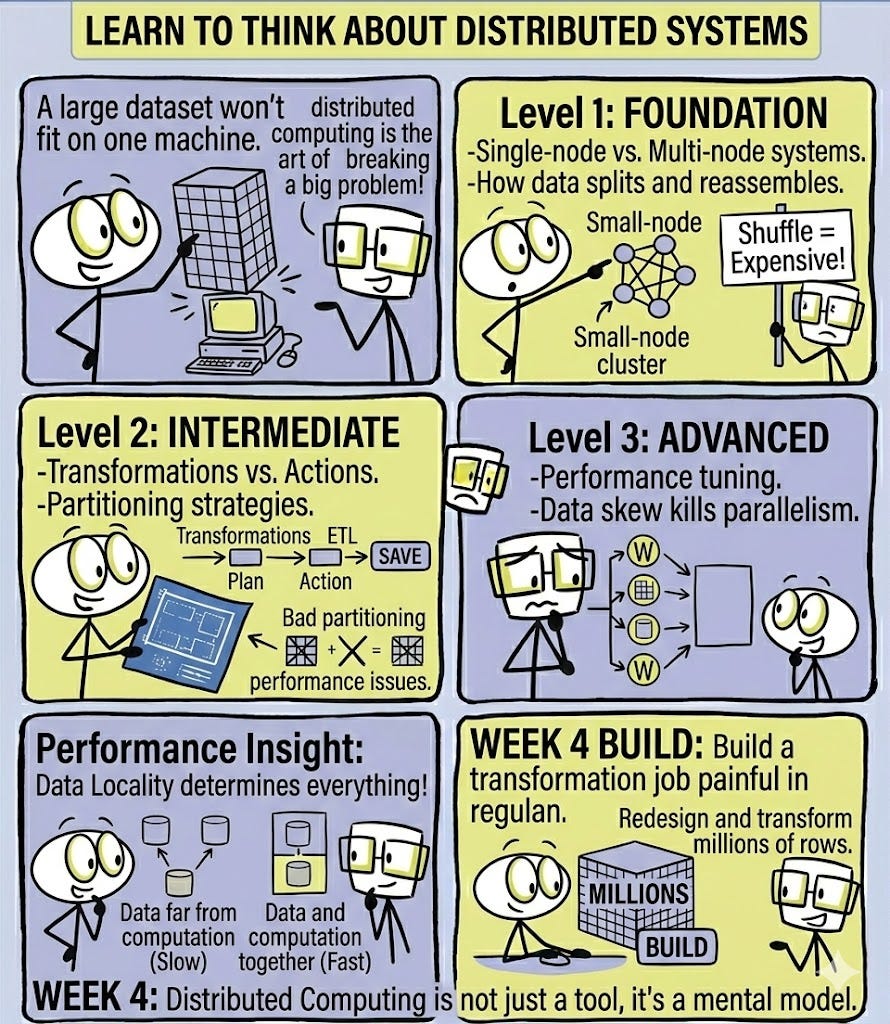

Week 4: Learn to Think About Distributed Systems

Apache Spark is not a tool you learn. It is a mental model you develop.

The core idea: some problems are too big for one machine. Distributed computing is the art of breaking a big problem into smaller problems, solving them in parallel, and assembling the results. Once that concept is clear, the code is just syntax.

Level 1: Foundation Why distributed computing exists and what problems it actually solves. The difference between a single-node and a multi-node system. How data gets split across machines and reassembled. What a shuffle is and why it is the most expensive operation in distributed computing.

Level 2: Intermediate DataFrames in a distributed context versus a single-machine context. Transformations versus actions and why the distinction changes how you write code. Partitioning strategies and how the wrong one quietly destroys performance. Reading and writing large datasets efficiently.

Level 3: Advanced Performance tuning from first principles. Understanding data skew and why it kills parallel execution. Caching strategy and when holding data in memory helps versus hurts. Debugging a distributed job that fails silently on only some partitions. Designing pipelines that stay performant as data volumes grow unpredictably.

What to build: take a large dataset, at least a few million rows, and write a transformation job that would be painfully slow in regular Python. Then think through how distributing that work across multiple machines changes the execution. Understand the tradeoffs between partitioning strategies before worrying about which cluster to run it on.

The concept that changes everything: data locality. Where the data lives relative to where the computation happens determines everything about performance. This is the insight that separates engineers who tune systems from engineers who just run them.

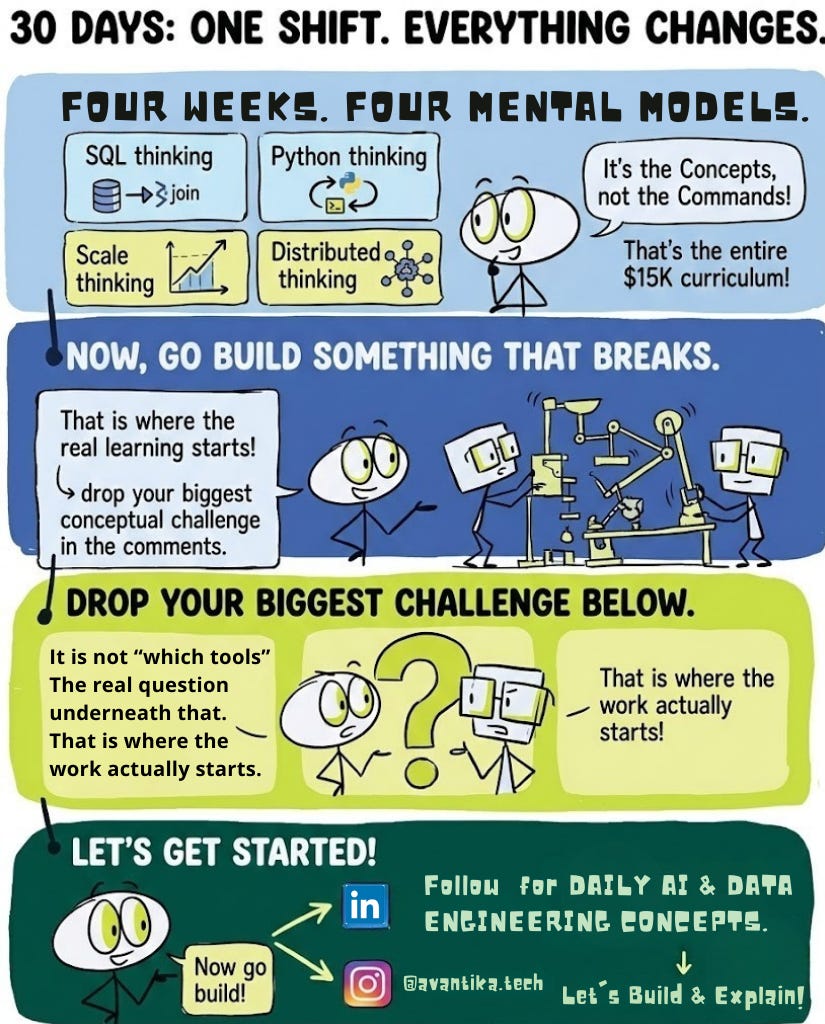

Four Weeks. One Shift. Everything Changes.

Four mental models. SQL thinking, Python thinking, scale thinking, distributed thinking. That is the entire curriculum of a $15K bootcamp. You just got it in 30 days by understanding the concepts not memorizing the commands.

Now go build something that breaks. That is where the real learning starts.

Drop your biggest conceptual challenge in the comments. Not “I do not know which tool to learn.” The real question underneath that. That is where the work actually starts.

That is all for today.

If this helped, forward it to a friend or colleague who is figuring out their next move in tech. This newsletter costs less than your daily coffee and it might be the thing that gets them unstuck.

Follow me on LinkedIn and Instagram @avantika.tech for daily AI and Data Engineering content.

See you tomorrow.

— Avantika Penumarty

Thank you Avantikka for the roadmap! Definitely helps people like me who are trying to get back into the industry after a career gap! Really been enjoying all your posts and the SQL challenges!

Saw it on LinkedIn. Looks like you underestimated your popularity 😆😆

Thank you for the roadmap ☺️